Autonomous Flying in a Swarm: BionicBee, an ultralight flying object with precise control

With the BionicBee, the Bionic Learning Network has now for the first time developed a flying object that can fly in large numbers and completely autonomously in a swarm. The BionicBee will present its first flight show at the Hannover Messe 2024.

Zimmer Group's New ZIMO Flexible Robot Cell Smooths the Path to Automation

There are few who would argue that the combination of labor and skills shortages, together with the drive to attain greater levels of productivity, means that automation is now becoming an essential part of manufacturing for a greater number of businesses, especially SMEs.

The most compact Motion Control System on the market

FAULHABER is introducing a new Motion Control System. More precisely: The world's smallest Integrated Motion Controller.

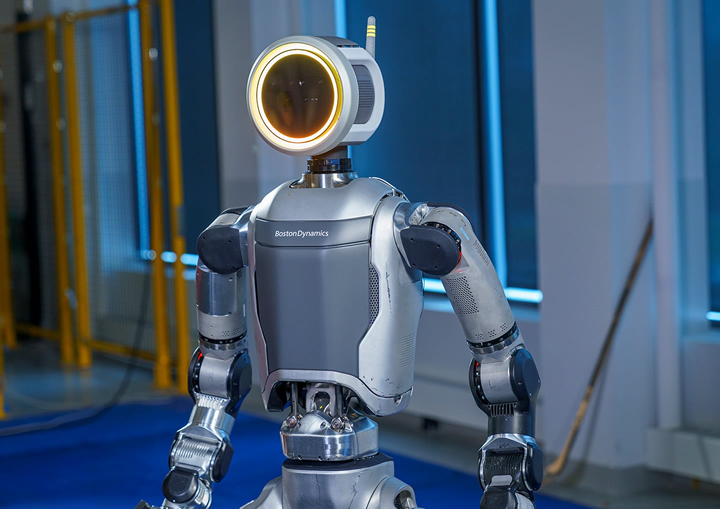

An Electric New Era for Atlas

Our new electric Atlas platform is here. Supported by decades of visionary robotics innovation and years of practical experience, Boston Dynamics is tackling the next commercial frontier.

XPONENTIAL 2024 Launches Next Week in San Diego

Pertinent keynotes, defense programming, international participation, and a start-up pavilion will move the autonomous and robotics industry forward

Are Labeling and Palletizing Robots the Secret Weapon Manufacturers Need?

Robots are not magic fixes to all manufacturing industry obstacles, but they can allow users to overcome many challenges when implemented thoughtfully.

Amazon announces over €700 million investment in robotics and AI powered technologies across Europe

Amazon is using its Innovation Lab, home to a diverse team of scientists and engineers from all over the world, to develop and test new technologies to better support employees and deliver for customers.

Shibaura Machine to unveil new SCARA features at Automate 2024

Visitors will be able to see the two newest models in the THE range, the THE800 and THE1000, in action at the show at its booth, which is managed by TM Robotics, its distribution partner in North, Central and South America.

How Small Manufacturers are Building a Business Case for Robotics

Smaller manufacturers are the fastest-growing area of industrial robotics today, driven by the need for new collaborative robotics systems.

Mouser Electronics Empowers Next Generation of Engineers by Sponsoring FIRST Robotics Competition for Youth

Mouser will be a major sponsor at the upcoming FIRST® in Texas/UIL State Robotics Championships, occurring April 3-6, and the FIRST Championships, on April 17-20, at the George R. Brown Convention Center in Houston, Texas.

Factories of the future - What will manufacturing facilities look like in 2044?

The landscape of manufacturing is set to evolve dramatically over the next two decades, as cutting-edge technologies redefine the way we produce goods. To envision what the future may look like, we do not need to rely solely on idle speculation.

Why Customer Service Robots Need Ethical Decision-Making: Trust and Benefits for Businesses

The integration of moral robots in customer service does more than just streamline operations; it fundamentally alters the relationship between businesses and their customers.

NVIDIA Announces Project GR00T Foundation Model for Humanoid Robots and Major Isaac Robotics Platform Update

Isaac Robotics Platform Now Provides Developers New Robot Training Simulator, Jetson Thor Robot Computer, Generative AI Foundation Models, and CUDA-Accelerated Perception and Manipulation Libraries

ABB unveils its innovative mobile robot with Visual SLAM AI-technology and AMR Studio® Suite

Equipped with Visual SLAM, ABB's AMR T702 trolley robot offers increased speed, accuracy and autonomy in navigation and logistics. Integration with ABB's AMR Studio software allows new users to easily configure AMR routes and jobs.

Apptronik and Mercedes-Benz Enter Commercial Agreement That Will Pilot Apptronik's Apollo Humanoid Robot in Mercedes-Benz Manufacturing Facilities

The partnership represents Apptronik's first publicly announced commercial deployment of Apollo and the first application of humanoid robotics for Mercedes-Benz


Featured Product

Destaco's PNEUMATIC PARALLEL GRIPPERS - RTH & RDH

Destaco's Robohand RDH/RTH Series 2- and 3-jaw parallel grippers have a shielded design that deflects chips and other particulates for more reliable, repeatable operation in part-gripping applications ranging from small and lightweight to large and heavy. The RDH Series of rugged, multi-purpose parallel grippers for heavy parts is designed for high-particulate environments, automotive engine block and gantry system applications, and is ideal for heavy part gripping. The series includes eight sizes, covering small, lightweight parts through large, heavy parts. The RTH Series of powerful, multi-purpose parallel grippers for heavy parts is designed for large round parts, automotive engine block and gantry system applications, and heavy part gripping. It is likewise available in eight sizes, covering small, lightweight parts through large, heavy parts.
